Information | Free Full-Text | Machine Learning in Python: Main Developments and Technology Trends in Data Science, Machine Learning, and Artificial Intelligence

GitHub - IndicoDataSolutions/Foxhound: Scikit learn inspired library for gpu-accelerated machine learning

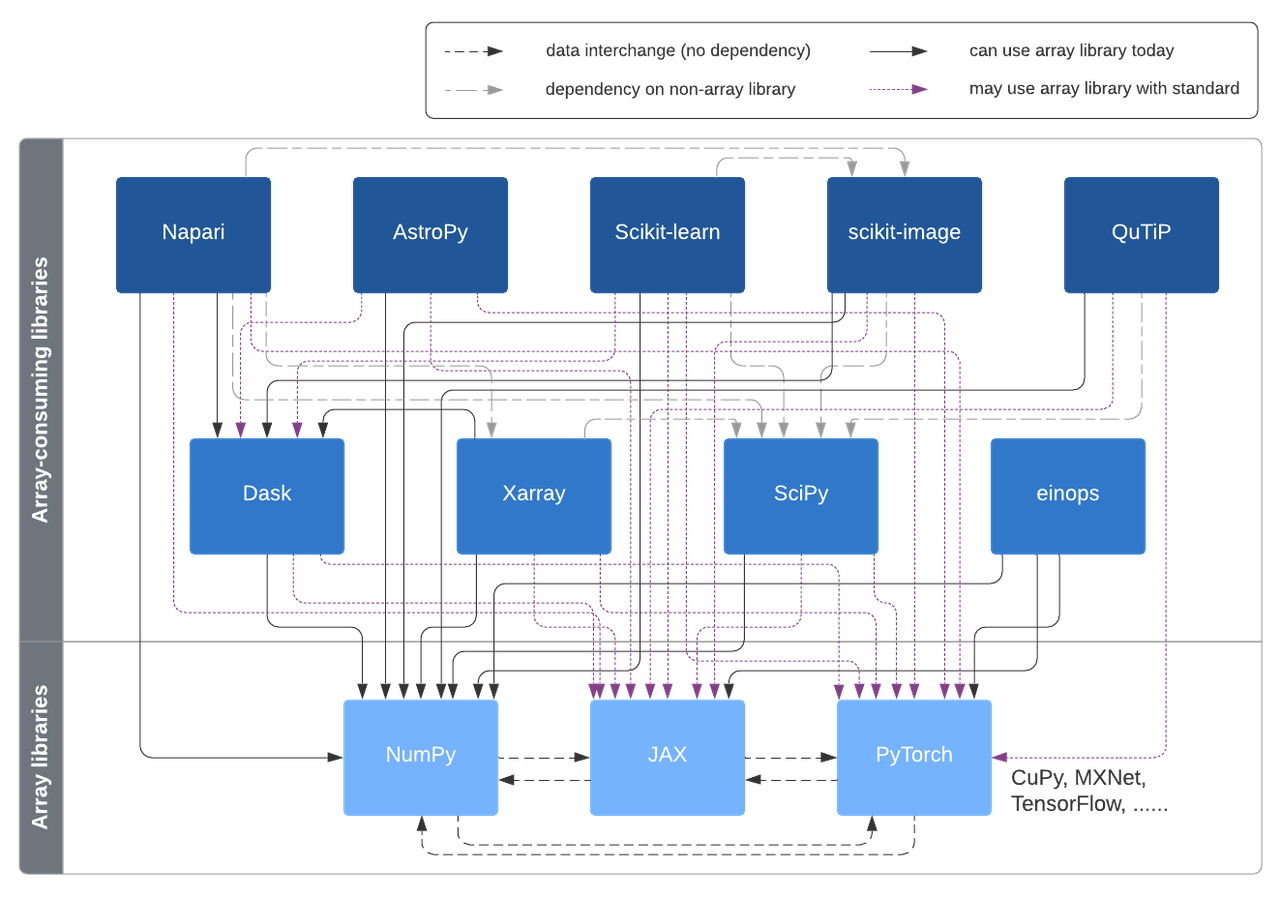

A vision for extensibility to GPU & distributed support for SciPy, scikit-learn, scikit-image and beyond | Quansight Labs

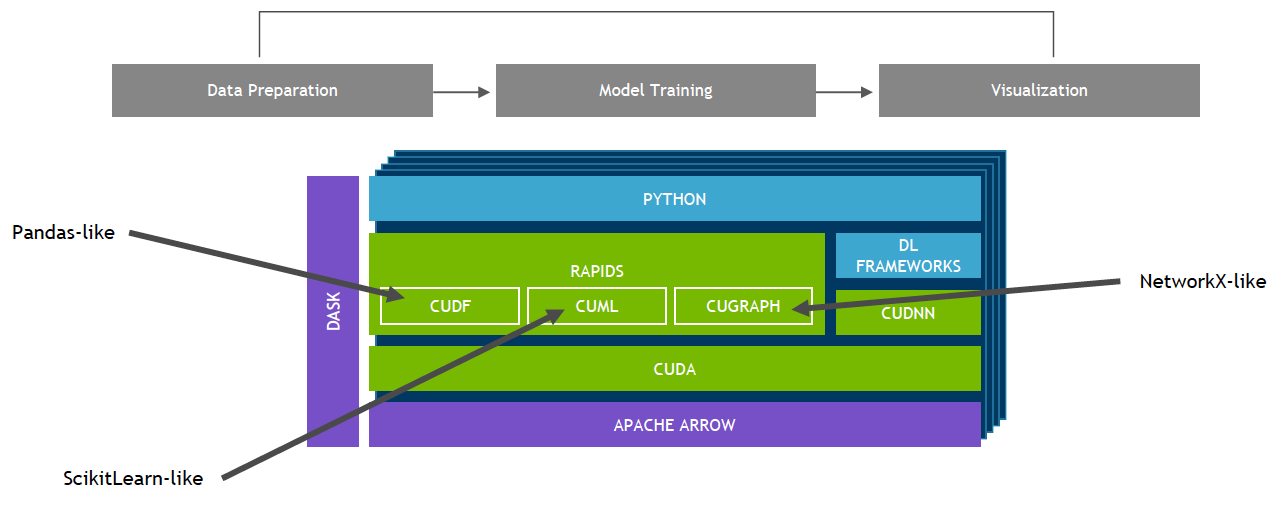

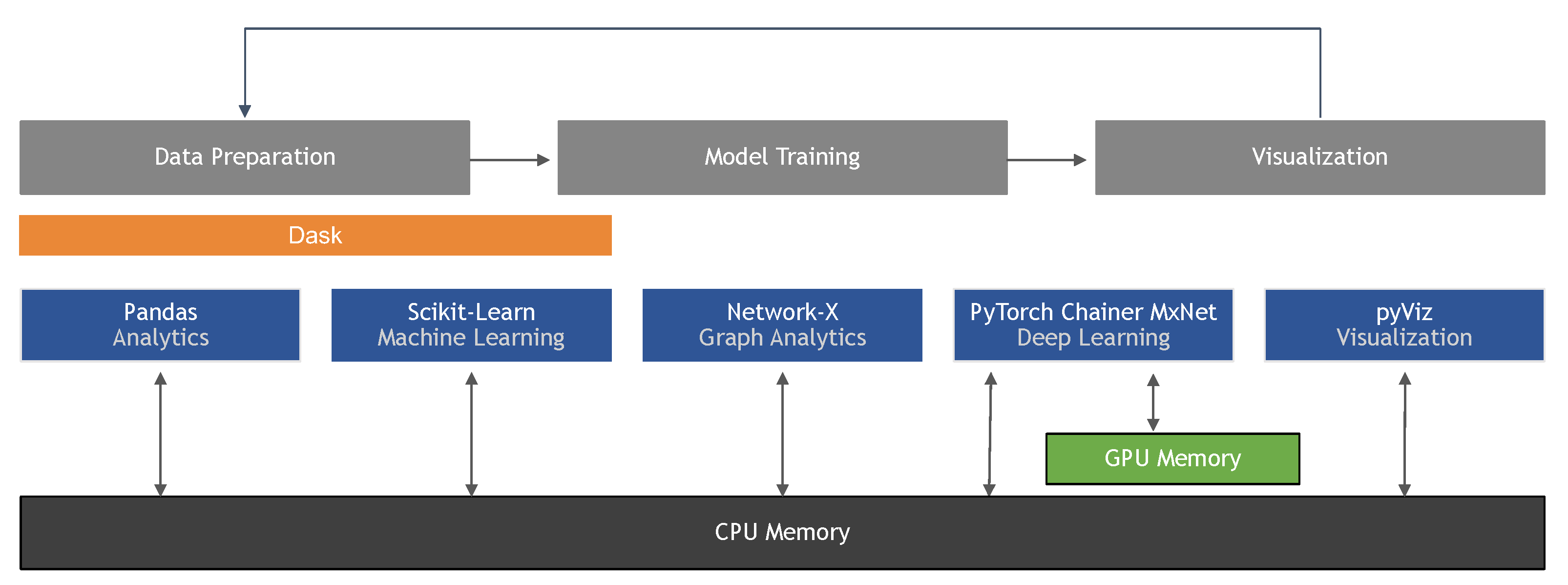

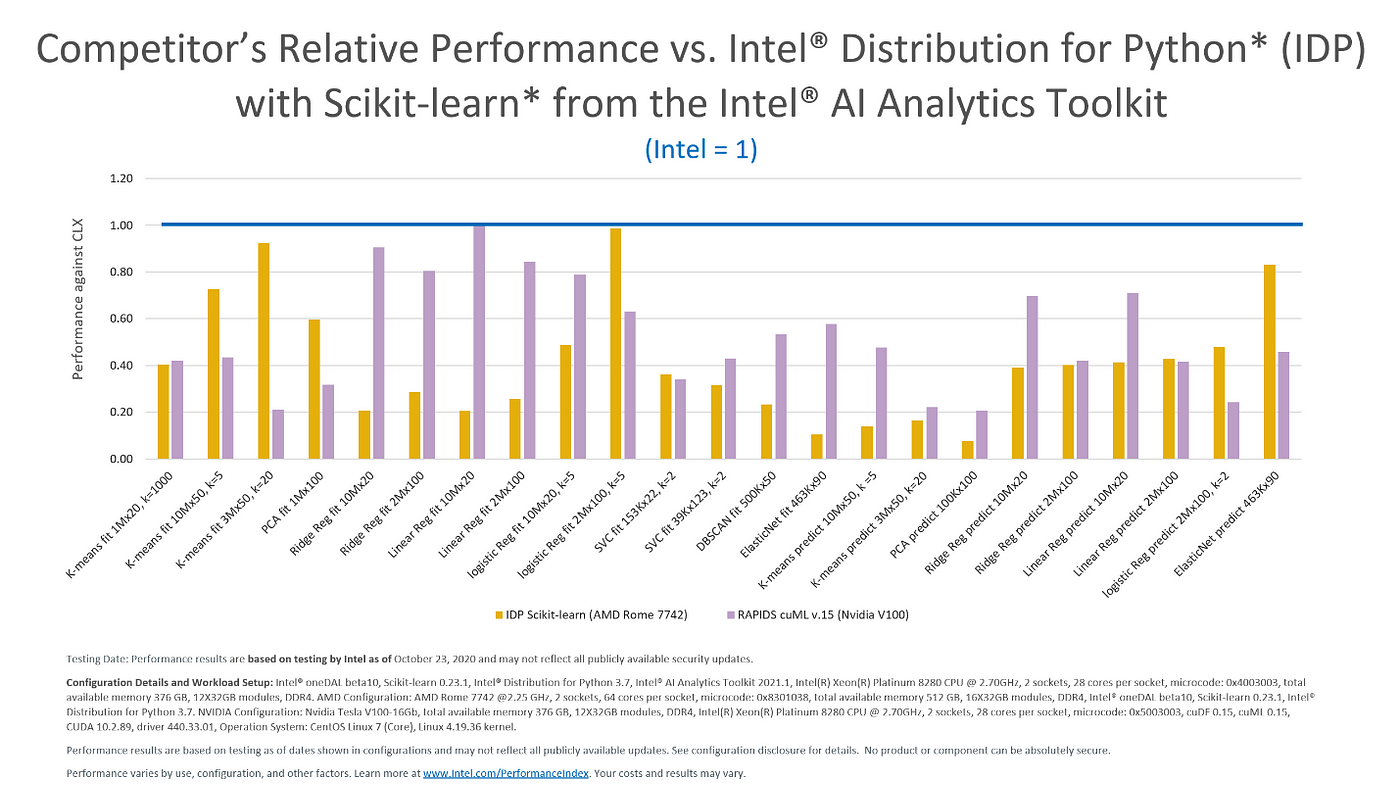

The Scikit-Learn Allows for Custom Estimators to Run on CPUs, GPUs and Multiple GPUs - Data Science of the Day - NVIDIA Developer Forums

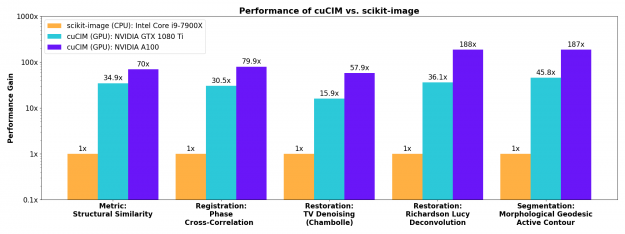

Accelerating Scikit-Image API with cuCIM: n-Dimensional Image Processing and I/O on GPUs | NVIDIA Technical Blog

Intel Gives Scikit-Learn the Performance Boost Data Scientists Need | by Rachel Oberman | Intel Analytics Software | Medium

GPU Acceleration, Rapid Releases, and Biomedical Examples for scikit-image - Chan Zuckerberg Initiative